Yérali Gandica

February 3rd 2022, 2pm; Room 26-00/332

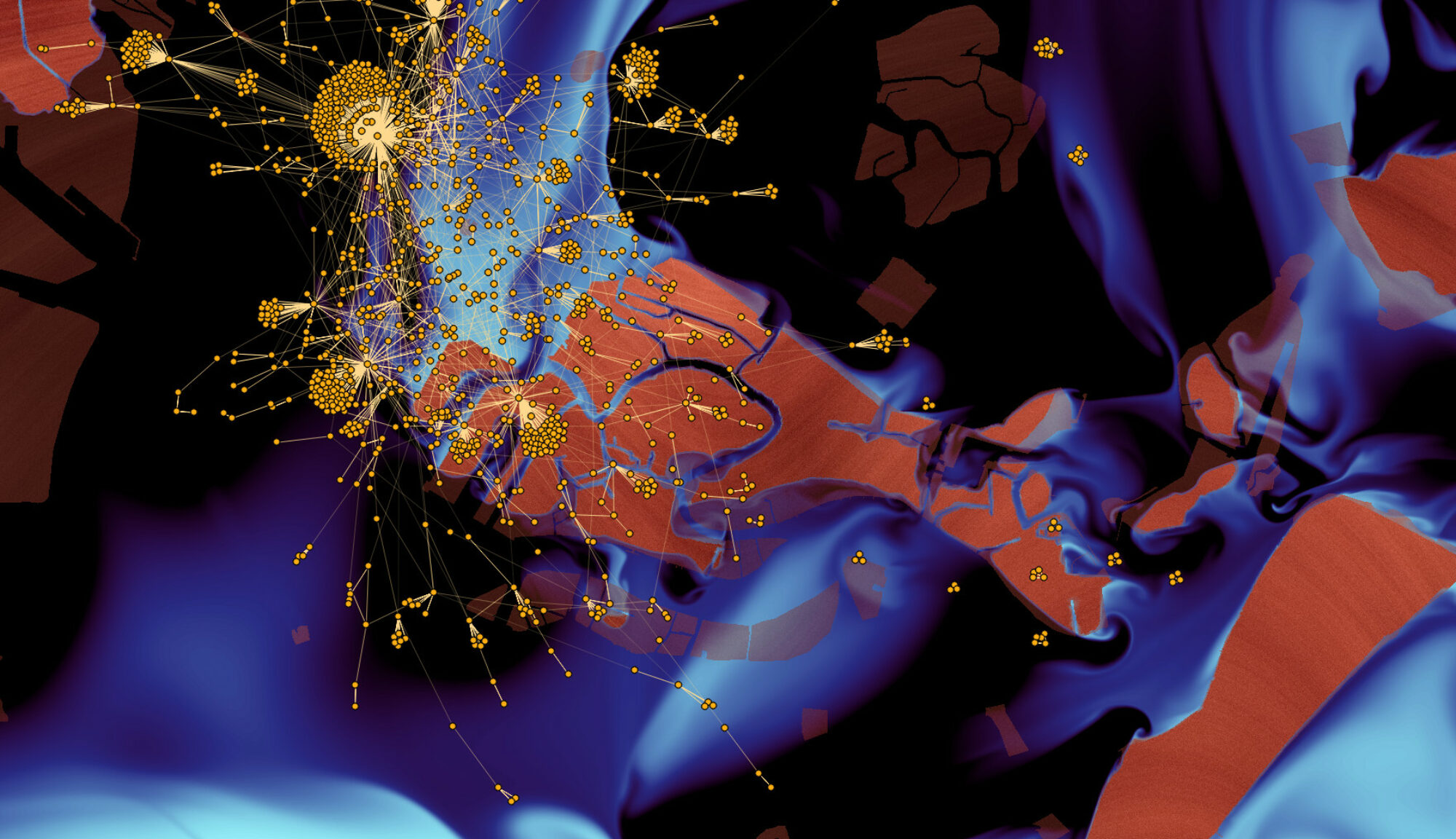

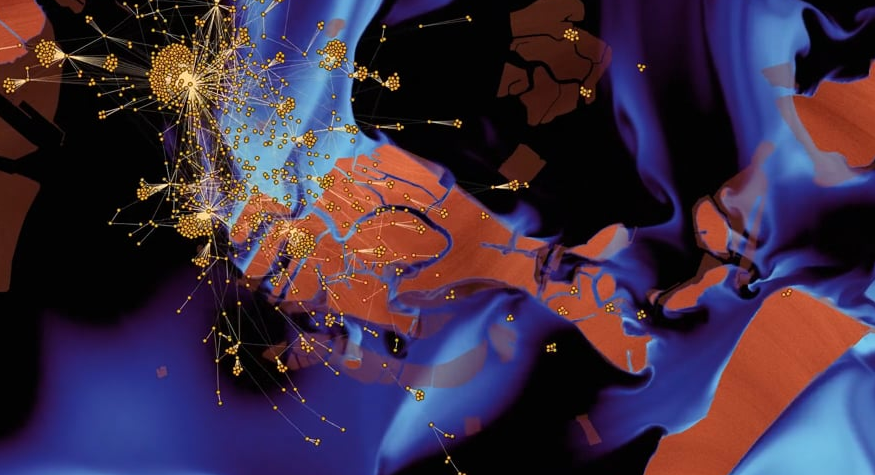

In this talk, I will present some of my publications that use Complex Systems approaches to understand the emergence of socio-economic phenomena.

Complex Systems science studies phenomena that emerge from the interactions between a system's constituents and therefore cannot be understood by studying a single, isolated component. The field has incorporated concepts and methods from many areas, ranging from statistical physics and biology to economics and sociology. Through a constant process of cross-fertilization, these areas have given rise to new types of questions framed within the field of Complex Systems.

The study of interacting particle systems has long been an essential subject of physics. Statistical methods have allowed significant advances in this area by providing a bridge between the microscopic interactions and the large-scale collective behaviour of the system. Applying the methods of statistical physics to social phenomena, where the interacting particles are now human beings, has proven very fruitful for understanding many features of social collective behaviour. The success of statistical physics approaches in explaining social data is currently attracting much interest, as shown by the rapidly increasing number of publications in the field by both natural and social scientists.

Some of the works I will present are based on simulations, while others are based on real data; in some cases, the simulations are compared directly with real data. I will also describe some work in progress and projects for the medium and long term, to give a flavour of the scientific path I plan to follow and to illustrate the coherence of my line of research.